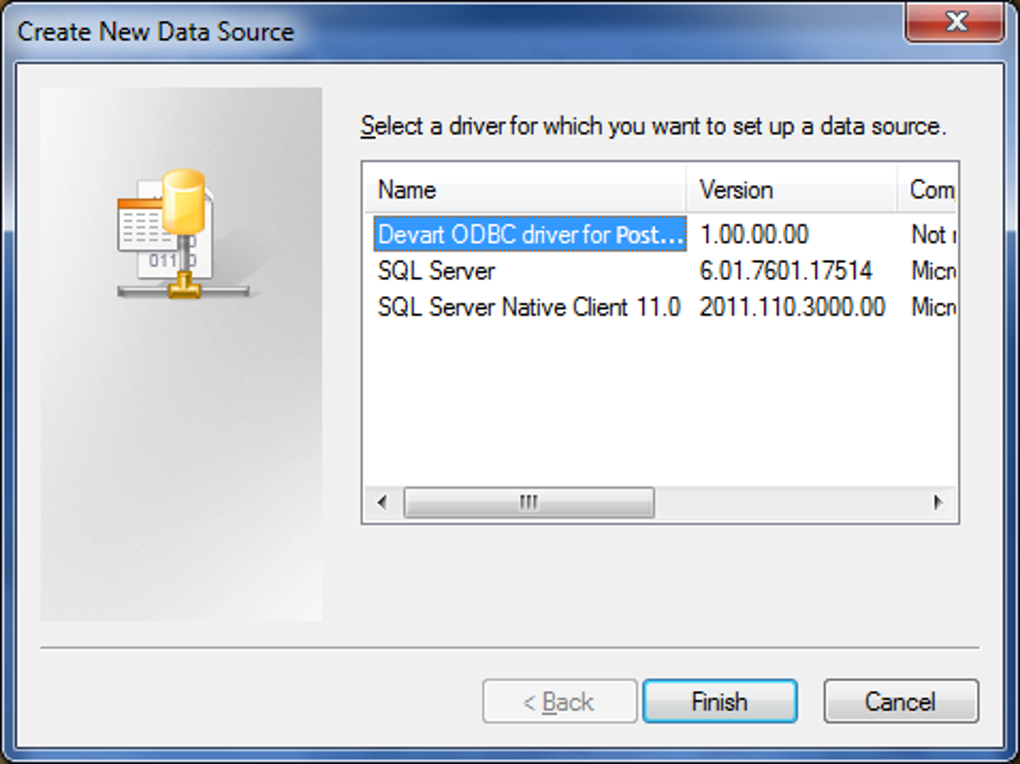

With the JAR file installed, we are ready to work with live PostgreSQL data in Databricks. Start by creating a new notebook in your workspace. Name the notebook, select Python as the language (though Scala is available as well), and choose the cluster where you installed the JDBC driver. When the notebook launches, we can configure the connection, query PostgreSQL, and create a basic report.

Configure the Connection to PostgreSQL

Connect to PostgreSQL by referencing the JDBC Driver class and constructing a connection string to use in the JDBC URL. Additionally, you will need to set the RTK property in the JDBC URL (unless you are using a Beta driver). You can view the licensing file included in the installation for information on how to set this property.

Step 1: Connection Information

    driver = ""
    url = "...User=postgres;Password=admin;Database=postgres;Server=127.0.0.1;Port=5432;"

For assistance in constructing the JDBC URL, use the connection string designer built into the PostgreSQL JDBC Driver. Either double-click the JAR file or execute the JAR file from the command line. Fill in the connection properties and copy the connection string to the clipboard.

To connect to PostgreSQL, set the Server, Port (the default port is 5432), and Database connection properties, and set the User and Password you wish to use to authenticate to the server. If the Database property is not specified, the data provider connects to the user's default database.

Once you configure the connection, you can load PostgreSQL data as a dataframe using the CData JDBC Driver and the connection information.

    remote_table = spark.read.format("jdbc") \
        .option("driver", driver) \
        .option("url", url) \
        .option("dbtable", "...") \
        .load()

Check the loaded PostgreSQL data by calling the display function.

Step 3: Checking the result

    display(remote_table.select("ShipName"))

If you want to process data with Databricks SparkSQL, register the loaded data as a Temp View.

    remote_table.createOrReplaceTempView("SAMPLE_VIEW")
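The connection, load, and Temp View steps in this walkthrough can be sketched end to end as below. This is a minimal illustration rather than the driver's documented procedure. The helper names (`build_connection_string`, `load_remote_table`, `query_view`) are hypothetical, and the semicolon-separated `Key=Value;` layout of the connection string is an assumption. The JDBC URL prefix, the RTK value, the driver class name, and the table name are not given here, so they are left as parameters to fill in from the driver's own documentation and licensing file. `spark` and `display` are the objects Databricks predefines in a Python notebook.

```python
# Sketch under stated assumptions; helper names are illustrative only.

def build_connection_string(props):
    """Join connection properties as semicolon-terminated Key=Value pairs.

    The "Key=Value;" layout is an assumption about the driver's format.
    """
    return "".join(f"{key}={value};" for key, value in props.items())


def load_remote_table(spark, driver, url, table):
    """Load one table as a DataFrame through Spark's generic JDBC source."""
    return (
        spark.read.format("jdbc")
        .option("driver", driver)   # JDBC driver class name
        .option("url", url)         # full JDBC URL, including the RTK property
        .option("dbtable", table)   # table to read
        .load()
    )


def query_view(spark, remote_table, view_name="SAMPLE_VIEW"):
    """Register the DataFrame as a Temp View and query it with SparkSQL."""
    remote_table.createOrReplaceTempView(view_name)
    return spark.sql(f"SELECT ShipName FROM {view_name} LIMIT 10")


# Connection properties taken from the example values in the article.
props = {
    "User": "postgres",
    "Password": "admin",
    "Database": "postgres",
    "Server": "127.0.0.1",
    "Port": 5432,
}
connection_string = build_connection_string(props)

# In a notebook cell, with your own prefix, driver class, and table name:
#   url = "<JDBC URL prefix and RTK property>" + connection_string
#   remote_table = load_remote_table(spark, driver, url, "<table name>")
#   display(query_view(spark, remote_table))
```

Keeping the properties in a dictionary makes it easier to swap the server or credentials without hand-editing a long URL, and passing `spark` in explicitly lets the helpers be exercised outside a notebook.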
